As legislators at all levels of government throughout the United States cut programs and budgets, all 50 states, local areas, urban areas, territories, and tribes (hereinafter, “jurisdictions”) are becoming increasingly aware of their need to justify grant expenditures and articulate progress, efficiency, and improvements resulting from those grants in a defensible and consistent manner. Today, to ensure continued grant program support, jurisdictions must not only show grant-funded accomplishments but also provide a plausible and detailed road map for achieving program goals and objectives.

CNA, a not-for-profit company serving all levels of government, has created the Grant Effectiveness Model (GEM) to help jurisdictions meet these goals. GEM is a logic model, in effect an evaluation plan, that helps jurisdictions determine the effectiveness of grants. It uses the performance measurement and evaluation terminology of the U.S. Government Accountability Office (GAO) and borrows additional performance measurement techniques and tools from the U.S. Department of Energy.

GEM provides a project-centered, performance-focused, outcome-based approach that captures the impact of grant dollars by identifying, measuring, and assessing both the purchases made with grant dollars and the related returns on those investments. Although GEM was originally designed as a working tool for solving the grant evaluation and feedback problems of the Department of Homeland Security’s Federal Emergency Management Agency (FEMA), its generic structure may be applied to any of the U.S. government’s grant programs.

The Grant Effectiveness Model (GEM): A Four-Step Process

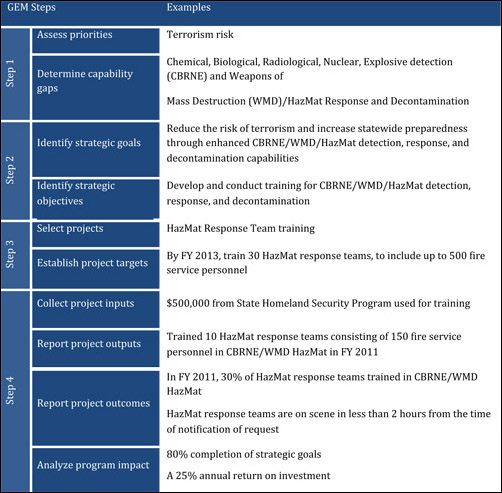

GEM is a four-step process that: (1) links strategic priorities to required capabilities; (2) identifies strategic goals and objectives; (3) provides guidance on selecting projects and establishing project targets; and (4) demonstrates the importance of collecting, analyzing, and reporting project inputs, outputs, outcomes, and program impact.

GEM does this by tracking the outputs, outcomes, and impact of grants to assess progress; it can also be used to develop strategies or to improve or change program direction. (The GEM components shown in the graphic accompanying this article are closely aligned with the preparedness grants of FEMA’s State Homeland Security Program, which serves here as an example.)

Step 1 – Assess Priorities and Gaps

Step 1 has two critical components: assessing priorities and determining capability gaps. A jurisdiction should first conduct a risk assessment to prioritize its risks and threats. A capability assessment and gap analysis then identify the deficiencies in the capabilities associated with highly ranked risks, and those results inform grant funding decisions.
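The gap analysis in Step 1 can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration rather than part of GEM itself: the `Capability` record, the 0-10 capability scale, the risk ranks, and the sample capability names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Capability:
    name: str
    risk_rank: int        # hypothetical scale: 1 = highest-priority risk
    target_level: float   # desired capability level (assumed 0-10 scale)
    current_level: float  # assessed capability level (same assumed scale)

    @property
    def gap(self) -> float:
        # A gap exists only where the current level falls short of the target.
        return max(self.target_level - self.current_level, 0.0)


def prioritize_gaps(capabilities, top_n_risks=2):
    """Return capabilities tied to highly ranked risks that still have a gap,
    ordered by risk rank first and then by the size of the gap."""
    high_risk = [c for c in capabilities
                 if c.risk_rank <= top_n_risks and c.gap > 0]
    return sorted(high_risk, key=lambda c: (c.risk_rank, -c.gap))


# Hypothetical assessment results for one jurisdiction.
capabilities = [
    Capability("Mass care", risk_rank=3, target_level=8, current_level=4),
    Capability("Interoperable communications", risk_rank=1, target_level=9, current_level=6),
    Capability("Hazmat response", risk_rank=2, target_level=7, current_level=7),
    Capability("Public warning", risk_rank=1, target_level=8, current_level=3),
]

for cap in prioritize_gaps(capabilities):
    print(f"{cap.name}: risk rank {cap.risk_rank}, gap {cap.gap}")
```

In this sketch, capabilities tied to lower-ranked risks and capabilities with no remaining gap drop out of the list, which leaves a short ranking that could feed a funding discussion.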

Step 2 – Identify Goals and Objectives

The second step of the GEM process, identifying strategic goals and objectives, is essential to measuring a jurisdiction’s progress. Jurisdictions identify goals that achieve their vision and long-term focus and identify the capabilities that support each goal. Strategic objectives reflect a jurisdiction’s priorities and are thus tangible, measurable targets against which actual achievement can be compared. Each strategic goal encompasses at least one objective that can be used in tracking progress toward that goal. Moreover, each objective identifies a specific outcome. When these objectives are met, they achieve the jurisdiction’s strategic purpose, vision, and goals.

Step 3 – Establish Projects and Project Targets

Step 3 involves selecting projects and establishing project targets. Projects enhance and sustain capabilities and achieve outcomes that are aligned with strategic doctrine. For that reason, jurisdictions should select projects that not only achieve strategic goals and objectives but also build and/or sustain the capabilities needed to meet strategic priorities.

Project targets provide a standard for comparing what is to be achieved with what has been accomplished. They can reveal whether progress is satisfactory and whether the planned activities are important or relevant. Each target also identifies a specific output and the date by which it will be achieved.

Project targets should: (a) be discrete, that is, represent a single project rather than a group of projects or an initiative; and (b) support achievement of the strategic goals and reduction of capability gaps. So that progress can be assessed accurately, project targets must be specific, measurable, realistic, attainable, and expressed in simple terms (preferably using specific numbers and units of measure).
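One way to picture a target that meets these criteria is as a small record with a linked goal, a numeric quantity, a unit of measure, and a due date. The Python sketch below is illustrative only: the `ProjectTarget` fields and the checks in `target_problems` are assumptions, not a format that GEM prescribes, and judgments such as whether a target is realistic still require human review.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ProjectTarget:
    project: str     # a single project, not a group or an initiative
    goal: str        # strategic goal the project supports
    output: str      # what will be produced
    quantity: float  # how much will be produced
    unit: str        # unit of measure for the quantity
    due: date        # date by which the output will be achieved


def target_problems(target):
    """Return a list of reasons the target is not yet specific and measurable."""
    problems = []
    if not target.goal.strip():
        problems.append("no strategic goal linked")
    if target.quantity <= 0:
        problems.append("quantity must be a positive number")
    if not target.unit.strip():
        problems.append("no unit of measure")
    return problems


# Hypothetical examples: one well-formed target and one vague target.
good = ProjectTarget(
    project="Responder radio upgrade",
    goal="Strengthen interoperable communications",
    output="radios purchased",
    quantity=200,
    unit="radios",
    due=date(2025, 9, 30),
)
vague = ProjectTarget(
    project="Improve communications",
    goal="",
    output="better radios",
    quantity=0,
    unit="",
    due=date(2025, 9, 30),
)

print(target_problems(good))
print(target_problems(vague))
```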

Step 4 – Collect, Analyze, and Report

The final step involves collecting, analyzing, and reporting project and program inputs, outputs, outcomes, and impacts in a way that improves data quality without burdening grantees with additional reporting requirements. Following are brief summaries of why and how each of those elements contributes to the overall success of the project:

Inputs are resources used to achieve project targets. These include the funding source (specific grant program), any cost-sharing data (source and funding amount) related to the project and, more important, any funding data related to achieving strategic goals and objectives.

Outputs are the goods and/or services produced as a result of the grant funding provided. This information is needed to determine whether the investment is in fact achieving project targets or strategic goals and objectives, and/or whether it has resulted in a measurable improvement. In GEM, grantees report project outputs at least annually, focusing on quantitative results so that subsequent descriptions of completed activities can be compared with the original project targets. This allows for the continuous monitoring of grant accomplishments and progress toward strategic goals and objectives. Reported data must be consistent, complete, and accurate. GEM provides a reliable method that can be used as a framework for any data collection system.
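The comparison of reported outputs with original project targets can be sketched in a few lines of Python. The project names, quantities, and the `progress_report` function are hypothetical and serve only to illustrate the idea of quantitative, target-based monitoring; they do not describe an actual GEM data system.

```python
def progress_report(targets, reported):
    """Compare cumulative reported outputs with each project's target quantity.

    targets:  dict mapping project name -> target quantity (must be positive)
    reported: dict mapping project name -> list of annual output quantities
    """
    report = {}
    for project, target_qty in targets.items():
        if target_qty <= 0:
            raise ValueError(f"target for {project!r} must be positive")
        achieved = sum(reported.get(project, []))
        report[project] = {
            "target": target_qty,
            "achieved": achieved,
            "percent": round(100 * achieved / target_qty, 1),
            "met": achieved >= target_qty,
        }
    return report


# Hypothetical targets and two years of annual output reports.
targets = {"Responder radio upgrade": 200, "Shelter staff training": 50}
reported = {
    "Responder radio upgrade": [80, 70],
    "Shelter staff training": [30, 25],
}

for project, row in progress_report(targets, reported).items():
    print(project, row)
```

Because each report is a plain number in the same unit as the target, progress can be recomputed every reporting cycle without asking grantees for anything beyond the quantities they already report.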

Outcomes are the end results that indicate achievement of the strategic goals and objectives. The Government Performance and Results Act of 1993, which is monitored and supervised by the Office of Management and Budget (OMB), states clearly that outcome measurement “cannot be done until the results expected from a [project] have been first defined … [or] … until a [project of fixed duration] is completed, or until a [project which is continuing indefinitely] has reached a point of maturity or steady-state operations.” GEM can capture incremental progress toward the strategic goal if the results expected are observed during the execution of the grant. This is sometimes referred to as an intermediate outcome. A combination of data collection sources – e.g., surveys, interviews, focus groups, and performance monitoring – is also used to obtain outputs and outcomes.

Impact, according to OMB, determines “the direct or indirect effects or consequences resulting from achieving program goals … [and] is generally done through special comparison-type studies, and not simply by using data regularly collected through program information systems.” GEM assists jurisdictions in measuring the direct impact of preparedness grants in meeting a jurisdiction’s vision and goals and its progress toward achieving preparedness. When grantees generate clear and measurable goals and objectives, GEM can be used to capture their completion. The GEM dashboard (a CNA visualization tool that helps grantees and decision-makers make better-informed decisions) shows a jurisdiction’s progress toward fulfilling its vision and meeting its strategic goals. It should be noted that this type of impact analysis is traditionally conducted on mature programs with quantifiable strategic goals and objectives.

To briefly summarize: GEM is a systematic process for evaluating grants, improving program quality, and demonstrating a jurisdiction’s success in meeting its strategic priorities. More importantly, GEM helps jurisdictions communicate accomplishments to grantors.

Dianne L. Thorpe

Dr. Dianne L. Thorpe is a project director/research analyst on the Safety and Security Team at CNA. For the past decade, she has conducted research for DHS FEMA, the U.S. Navy, and the U.S. Marine Corps, as well as regional government entities specializing in homeland security, policy, training, program effectiveness, performance measurements, preparedness, and grants. She also served as the chemical program manager in DHS’s Science and Technology Directorate, Homeland Security Advanced Research Projects Agency – managing programs to accelerate the prototyping and deployment of technologies to reduce homeland vulnerabilities. She may be contacted at thorped@cna.org.


Kristen N. Koch

Dr. Kristen N. Koch is a CNA research analyst. She has conducted analysis related to homeland security, specializing in planning, capabilities, and grant program investment analysis for: DHS FEMA, Grant Programs Directorate (GPD) and National Preparedness Directorate (NPD); the Department of Health and Human Services (HHS); and regional government entities. Over the past two years, she has conducted analysis for FEMA/GPD’s Cost to Capability Initiative and FEMA/NPD’s Comprehensive Assessment System (CAS). She also has supported the Quadrennial Homeland Security Review. She may be contacted at kochk@cna.org.
